Bend, Not Shift

The missing kind of learning in the age of scaling laws

The strangest thing about neural scaling laws isn't that models get better as they get bigger. Of course they do. The strange thing is how smoothly they get better.

Across enormous ranges of model size, data, and compute, loss falls along a simple power law. On a log-log plot it becomes a line. Add an order of magnitude of compute, and the model improves by a predictable fraction. Add another, and it improves by another predictable fraction. The process is astonishingly reliable. It's also strangely mechanical.

That straightness should bother us. If intelligence were recursively discovering better abstractions, why would each new order of magnitude buy roughly the same fractional gain as the last? Why doesn't the curve bend?

Networks do learn abstractions. The sharper question is whether those abstractions make future learning cheaper. At the level of aggregate pretraining curves, they don't seem to — not enough to change the macroscopic regime.

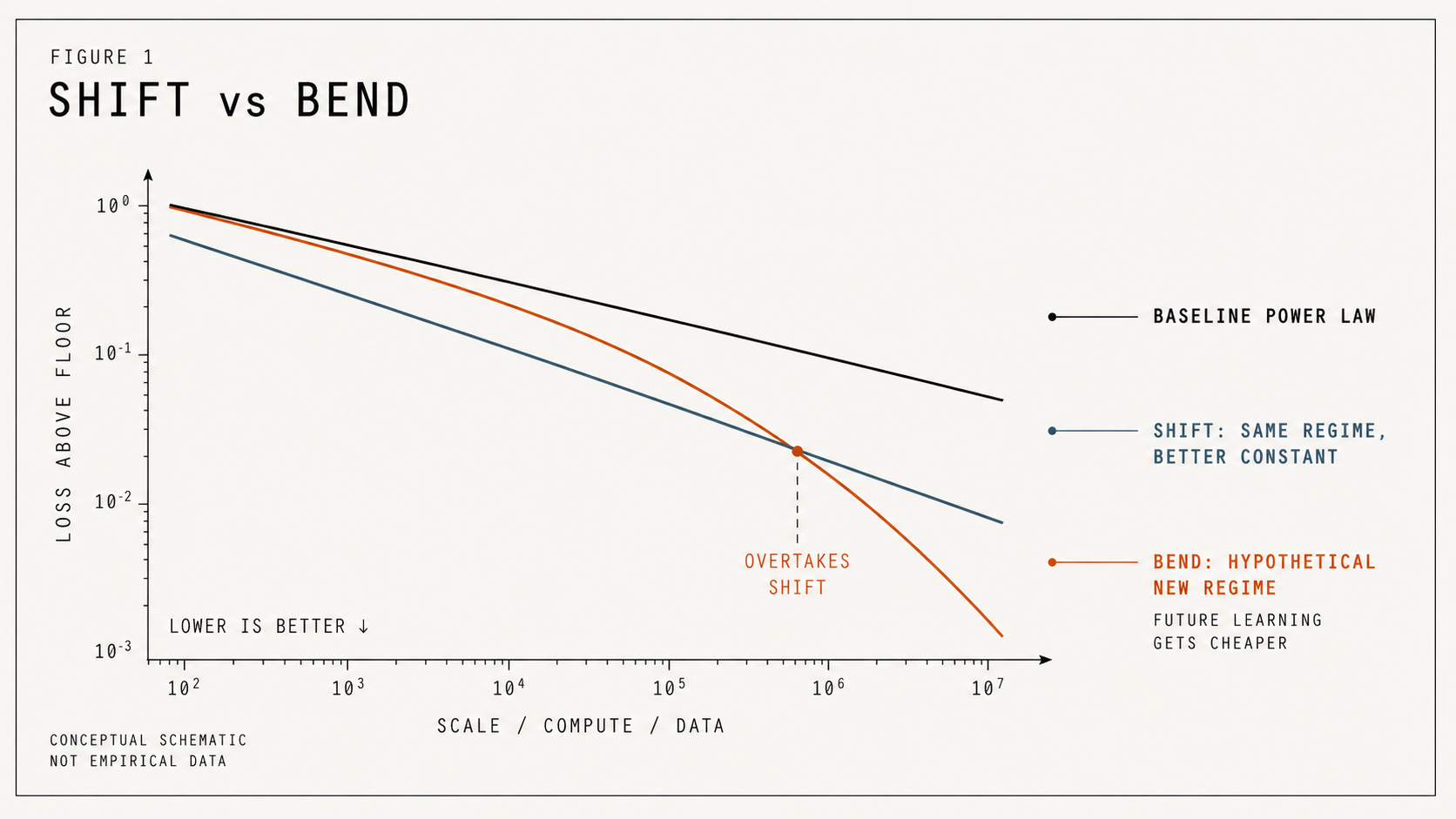

A better model shifts the line. A different kind of learner bends it.

Figure 1 — Shift vs Bend. Better engineering shifts the scaling curve. A different kind of learner bends it.

Start with the curve.

1. A power law is what blind sampling looks like

The standard form of neural scaling is

$ L(C)-L_\infty = aC^{-\alpha} $

where $L(C)$ is loss at compute scale $C$, $L_\infty$ is an irreducible floor, and $\alpha$ is the scaling exponent.

Kaplan et al. made this central to modern language modeling, showing loss scaled predictably with model size, dataset size, and compute across many orders of magnitude. Hestness et al. had already found similar power-law behavior across machine translation, language modeling, image processing, and speech recognition. The breadth matters: power-law scaling isn't a quirk of one domain, and it isn't cleanly explained by any single distribution like Zipfian word frequencies. It shows up wherever neural learners face a large effective space of structure to discover.

Scale works. But scale works slowly. If $\alpha = 0.05$, then 10× more compute multiplies the remaining loss by

$ 10^{-0.05} \approx 0.89 $

— about an 11% reduction in the gap to the floor. To halve that gap takes roughly

$ 2^{1/0.05} \approx 1{,}000{,}000 $

times more compute. A million-fold increase for one halving. That can still be economically enormous, but it isn't the curve you'd expect from a system that keeps discovering abstractions that make future learning dramatically cheaper. It's the curve you'd expect from a system that keeps pulling information out of a big space at a roughly steady rate.
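The arithmetic above is easy to check directly. The sketch below assumes only the power-law form already given; the function names are made up for illustration.

```python
def gap_multiplier(compute_factor: float, alpha: float) -> float:
    """Factor by which the reducible loss L(C) - L_inf shrinks when compute grows by compute_factor."""
    return compute_factor ** (-alpha)


def compute_factor_for(gap_ratio: float, alpha: float) -> float:
    """Compute multiplier needed to shrink the reducible loss to gap_ratio of its current value."""
    return gap_ratio ** (-1.0 / alpha)


alpha = 0.05
print(round(gap_multiplier(10, alpha), 3))    # 0.891
print(round(compute_factor_for(0.5, alpha)))  # 1048576
```

With a larger exponent the picture changes quickly: at `alpha = 0.5`, `compute_factor_for(0.5, 0.5)` is only 4.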

You can produce this curve with no understanding at all.

Sample $n$ points uniformly inside a $d$-dimensional box and ask how close the nearest sample gets to the origin. The volume of a radius-$r$ ball scales like $r^d$, so to land one sample inside it you need roughly

$ nr^d \approx 1, \qquad r \approx n^{-1/d}. $

A power law. No architecture, no gradient descent, no representation learning — just blind sampling and order statistics.
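A minimal simulation of that sampler, as a sketch. The box $[-1, 1]^d$, the sample sizes, the trial count, and the geometric-mean averaging are all choices made here for illustration; the only thing taken from the argument above is the predicted exponent $-1/d$.

```python
import math
import random


def nearest_distance(n: int, d: int, rng: random.Random) -> float:
    """Distance from the origin to the closest of n uniform samples in [-1, 1]^d."""
    best_sq = float("inf")
    for _ in range(n):
        sq = sum(rng.uniform(-1.0, 1.0) ** 2 for _ in range(d))
        if sq < best_sq:
            best_sq = sq
    return math.sqrt(best_sq)


def loglog_slope(xs, ys):
    """Least-squares slope of log(y) against log(x)."""
    lx = [math.log(x) for x in xs]
    ly = [math.log(y) for y in ys]
    mx, my = sum(lx) / len(lx), sum(ly) / len(ly)
    num = sum((a - mx) * (b - my) for a, b in zip(lx, ly))
    den = sum((a - mx) ** 2 for a in lx)
    return num / den


rng = random.Random(0)
d = 2
sizes = [100, 300, 1000, 3000, 10000]
trials = 50

# Average the log-distance over repeated trials (a geometric mean) to tame noise.
typical_dist = []
for n in sizes:
    logs = [math.log(nearest_distance(n, d, rng)) for _ in range(trials)]
    typical_dist.append(math.exp(sum(logs) / trials))

slope = loglog_slope(sizes, typical_dist)
print(f"fitted slope: {slope:.2f}, predicted: {-1 / d:.2f}")
```

Nothing in the loop adapts to earlier draws, yet the fitted slope should land near the predicted one.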

HyperLogLog shows the same idea even more starkly. A hash with at least $k$ leading zero bits occurs with probability $2^{-k}$, so seeing one takes about $2^k$ samples. The system improves by waiting for rare events. It remembers the best one so far, but it never uses that memory to change where future samples come from.
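The waiting-for-rare-events intuition can be sketched in a few lines. This is not the full HyperLogLog algorithm, which combines many registers and applies a bias correction; it is only the single-register idea, with SHA-256 truncated to 64 bits as an arbitrary choice of hash.

```python
import hashlib


def leading_zero_bits(value: int, width: int = 64) -> int:
    """Number of leading zero bits in a width-bit unsigned integer."""
    return width - value.bit_length()


def max_leading_zeros(n: int) -> int:
    """Longest leading-zero run among 64-bit hashes of the items 0..n-1."""
    best = 0
    for i in range(n):
        digest = hashlib.sha256(str(i).encode()).digest()
        h = int.from_bytes(digest[:8], "big")
        best = max(best, leading_zero_bits(h))
    return best


# The record should track log2(n) only roughly: each extra zero bit
# costs about twice as many samples as the last.
for exponent in (8, 12, 16):
    n = 2 ** exponent
    print(f"n = 2^{exponent}: longest run of leading zeros = {max_leading_zeros(n)}")
```

The record only moves when a rarer hash happens to show up; no draw is influenced by the ones before it.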

Figure 2 — A scaling law from no understanding at all. The dumb sampler keeps drawing random points and remembers the closest one. No architecture, no gradient descent, no learned features, and the best-so-far distance still falls along a clean power law.

Neural networks aren't dumb samplers. But the dumb sampler is a warning: a smooth power law on its own doesn't tell you whether a system is learning structure or just drawing more samples. From the outside, very different mechanisms can produce the same curve. And what keeps the dumb sampler stuck in its power law is simple: future samples still come from the same distribution.

So the question isn't whether the model learns. It does. It's whether what it learns changes what it samples next.

2. Local abstractions don't bend the curve

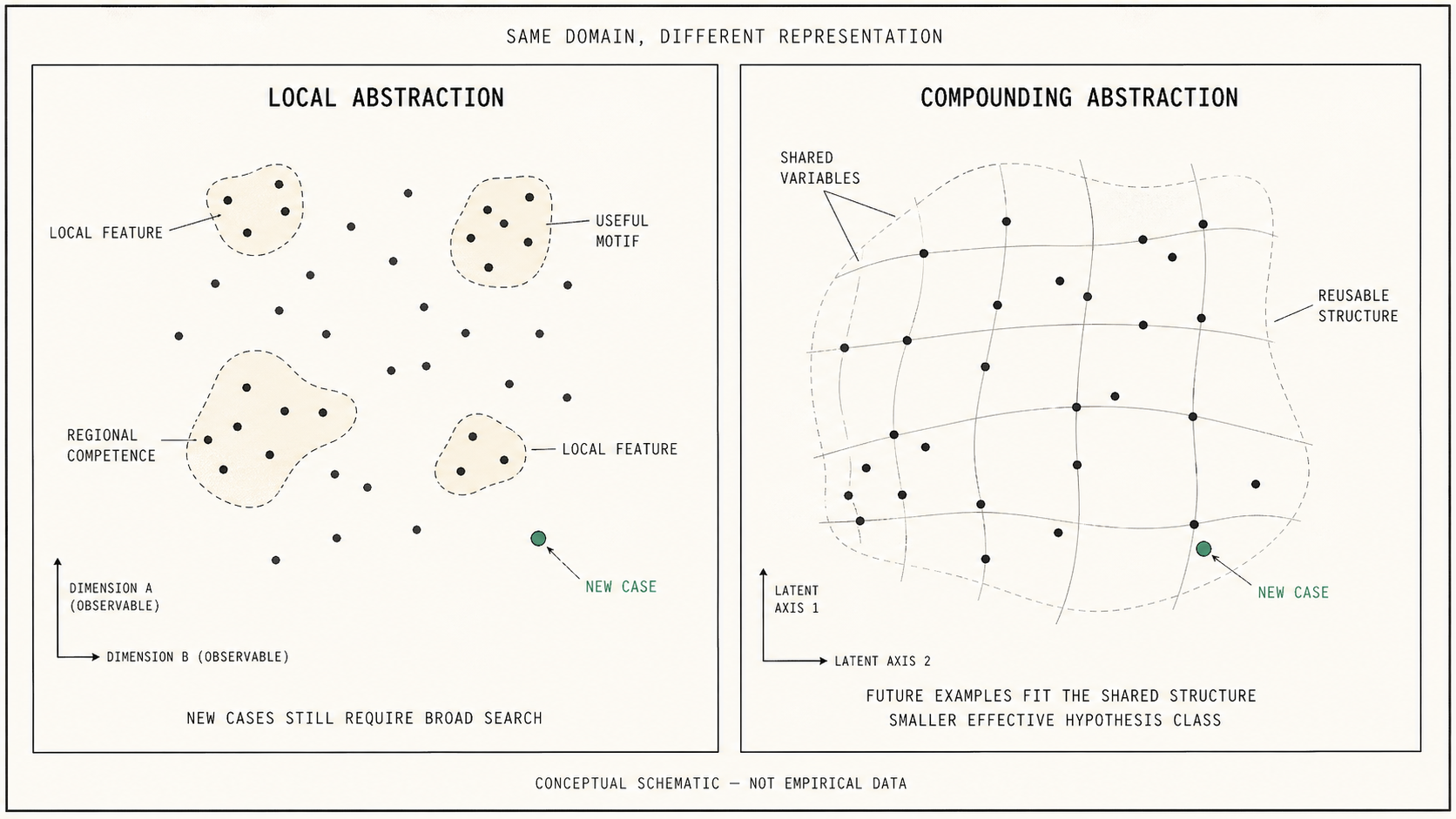

Networks clearly learn — they form features, circuits, skills, representations. But "the model learns abstractions" hides the move that matters.

A local abstraction helps the model on some region of the data. A compounding abstraction makes future learning easier by narrowing the hypothesis class — by giving the learner a coordinate system in which many future examples become cheap.

Networks learn lots of local abstractions while the global curve stays a power law. Michaud et al.'s quantization model points at how that works: if many discrete skills are learned in frequency order, and their frequencies are heavy-tailed, aggregate loss can fall smoothly even though many local transitions are happening underneath. The curve looks continuous; the underneath isn't.
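A toy version of that aggregation, as a sketch rather than a reproduction of Michaud et al.'s model. It assumes each skill is either unlearned (costing loss proportional to how often it is needed) or learned (costing nothing), that skills are mastered strictly in frequency order, and that frequencies follow a Zipf law with an exponent picked here for illustration.

```python
import math

ZIPF_EXPONENT = 1.5       # assumed: skill k is needed with frequency ~ k^-1.5
NUM_SKILLS = 1_000_000    # assumed size of the skill pool

weights = [k ** -ZIPF_EXPONENT for k in range(1, NUM_SKILLS + 1)]
total = sum(weights)

# tail[n] = frequency mass of the skills still unlearned once the
# n most common skills have been mastered.
tail = [0.0] * (NUM_SKILLS + 1)
for n in range(NUM_SKILLS - 1, -1, -1):
    tail[n] = tail[n + 1] + weights[n] / total

for n in (10, 30, 100, 300, 1000):
    print(f"{n:>5} skills learned -> residual loss {tail[n]:.4f}")

slope = (math.log(tail[1000]) - math.log(tail[10])) / (math.log(1000) - math.log(10))
print(f"log-log slope: {slope:.2f}")
```

Every individual skill here is a hard step from unlearned to learned, yet the residual loss falls along a nearly straight log-log line with slope close to $-(1.5 - 1) = -0.5$.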

So "power law means no abstraction" is too crude. The stronger claim is that current networks learn abstractions, but those abstractions don't compound enough to change the macroscopic regime. They improve performance. They don't make the next layer of learning dramatically cheaper.

The missing quantity is abstraction yield: how much learning one example, skill, or representation reduces the cost of learning related cases later.

Figure 3 — Local Abstraction vs Compounding Abstraction. Local abstractions improve performance in patches. Compounding abstractions reveal a shared structure that makes future learning cheaper.

But the curve alone can't tell you which one is happening underneath.

3. What the curve can't tell you

Many different mechanisms can produce power laws: manifold approximation, Zipf-distributed skill frequencies, percolation-like transitions, kernel spectra, order statistics, mixtures of many small learning events. A model can form circuits, reuse features, discover local skills, and reorganize representations — and if the aggregate compute–loss relationship still looks the same, it belongs to the same empirical regime. Arguing about which mechanism is "really" producing the curve is a bit like arguing whether a message arrived in Morse, UTF-8, or smoke signals: the mechanisms differ, but the coarse fact is the rate at which information gets through.

That gives us three distinct kinds of progress.

An intercept gain shifts the curve down — same loss, less compute. A slope gain improves the exponent but stays inside the same power-law family. A bend is something else: the learner has entered a different regime, and additional compute now buys more than the previous trend predicted, because the learner has changed the effective problem it's sampling from.
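One way to state the three cases is to write the scaling form in log-log coordinates. The symbols $a'$, $\alpha'$, and $\alpha_{\text{eff}}$ are notation introduced here for illustration, not taken from the cited papers.

$ \log\big(L(C)-L_\infty\big) = \log a - \alpha \log C $

An intercept gain replaces $a$ with some $a' < a$ at fixed $\alpha$: a parallel line, lower down. A slope gain replaces $\alpha$ with some $\alpha' > \alpha$: a steeper line, but still a line. A bend means no single pair $(a, \alpha)$ fits the whole range, because the local exponent

$ \alpha_{\text{eff}}(C) = -\frac{d \log\big(L(C)-L_\infty\big)}{d \log C} $

grows with $C$ instead of staying constant.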

Most modern progress is shift-like. Kaplan et al. showed that many architectural details mattered less than size, data, and compute across the studied ranges. Hestness et al. observed that improvements typically shifted error curves more than they changed exponents. That isn't "architecture doesn't matter." It's that within those regimes, most wins were efficiency wins, not regime changes.

But the curve isn't sacred.

4. The crack: change where you sample, change the curve

Sorscher et al. is the empirical hinge. Their data-pruning paper doesn't just show that smaller datasets can sometimes beat larger ones. It shows that if examples are ranked by a sufficiently good pruning metric, dataset-size scaling can break beyond the usual power law and, in the idealized theory, approach exponential scaling in the pruned dataset size. They report empirical signatures of this on ResNets trained on CIFAR-10, SVHN, ImageNet, and related vision settings.

Go back to the dumb sampler. Filtering data is, in the simplest reading, sampling from a smaller region of the same space. The sampler is still amnesiac (nothing it has drawn changes where it draws next), but it's been told to ignore part of the space. Same shape of curve. Lower constants. The nearest sample closes in faster on any target inside the restricted region.

Figure 4 — Restricted sampling, lower curve. The orange curve is the sampler restricted to the highlighted wedge; the gray curve is the same sampler with no restriction. Narrowing the sector lowers the curve: same exponent, lower intercept. The curve isn't sacred, but the sampler isn't smart, either.
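The "same exponent, lower intercept" claim follows from the same counting argument as before. Assume the sampler is confined to a region holding a fraction $f$ of the box's volume, and that the target sits in the interior of that region; $f$ is a symbol introduced here, and constant factors are dropped as in the earlier estimate. All $n$ samples now land in the smaller region, so the density near the target rises by $1/f$:

$ \frac{n}{f} r^d \approx 1, \qquad r \approx f^{1/d}\, n^{-1/d}. $

In log-log coordinates,

$ \log r \approx \tfrac{1}{d}\log f - \tfrac{1}{d}\log n, $

so the slope $-1/d$ is untouched and only the intercept moves, by $\tfrac{1}{d}\log f < 0$.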

That's the conceptual crack. The curve looked inevitable because we were sampling from the whole world. Restrict the world the sampler sees, and the curve drops. Sorscher's exponential-scaling result requires more than a static restriction — the pruning has to keep finding informative residue at scale — but the first-order intuition is the same: the curve depends on where the sampler is looking, not just on how many samples were drawn.

It also reveals the hard part. You only beat the power law if you know which examples matter — which sector is the right one to look at. That moves the problem from the student to the teacher. To bend the curve, you need more than a bigger model. You need a better way of deciding what the model should learn next.

5. The sampler should learn too

Modern pretraining is no longer raw IID internet soup. Frontier systems use filtering, deduplication, mixtures, synthetic data, annealing, post-training, preference optimization, and reinforcement learning. But most pipelines still aren't stateful teachers. They don't ask, at each stage:

- What abstraction has the model just formed?

- What examples would test it?

- What examples would extend it?

- What examples are now redundant because the abstraction already compresses them?

- What examples are too hard now but useful after one more prerequisite?

This isn't a new field from scratch. Curriculum learning, active learning, hard-example mining, data pruning, model-aware data selection, and reinforcement learning all orbit parts of this idea. The useful move is to connect them to a sharper empirical test: does the training process merely shift the curve, or does it help the learner discover structure that makes future learning cheaper?

The field is already moving. DoReMi treats pretraining mixture weights as something to optimize rather than accept. Data Mixing Laws and RegMix use small runs to predict better mixtures for larger models. MATES makes data selection depend on the evolving state of the model during pretraining. OLMo-style late-stage curriculum and annealing show that the same data can matter differently depending on when the model sees it.

Data is no longer passive fuel. It's a control surface.

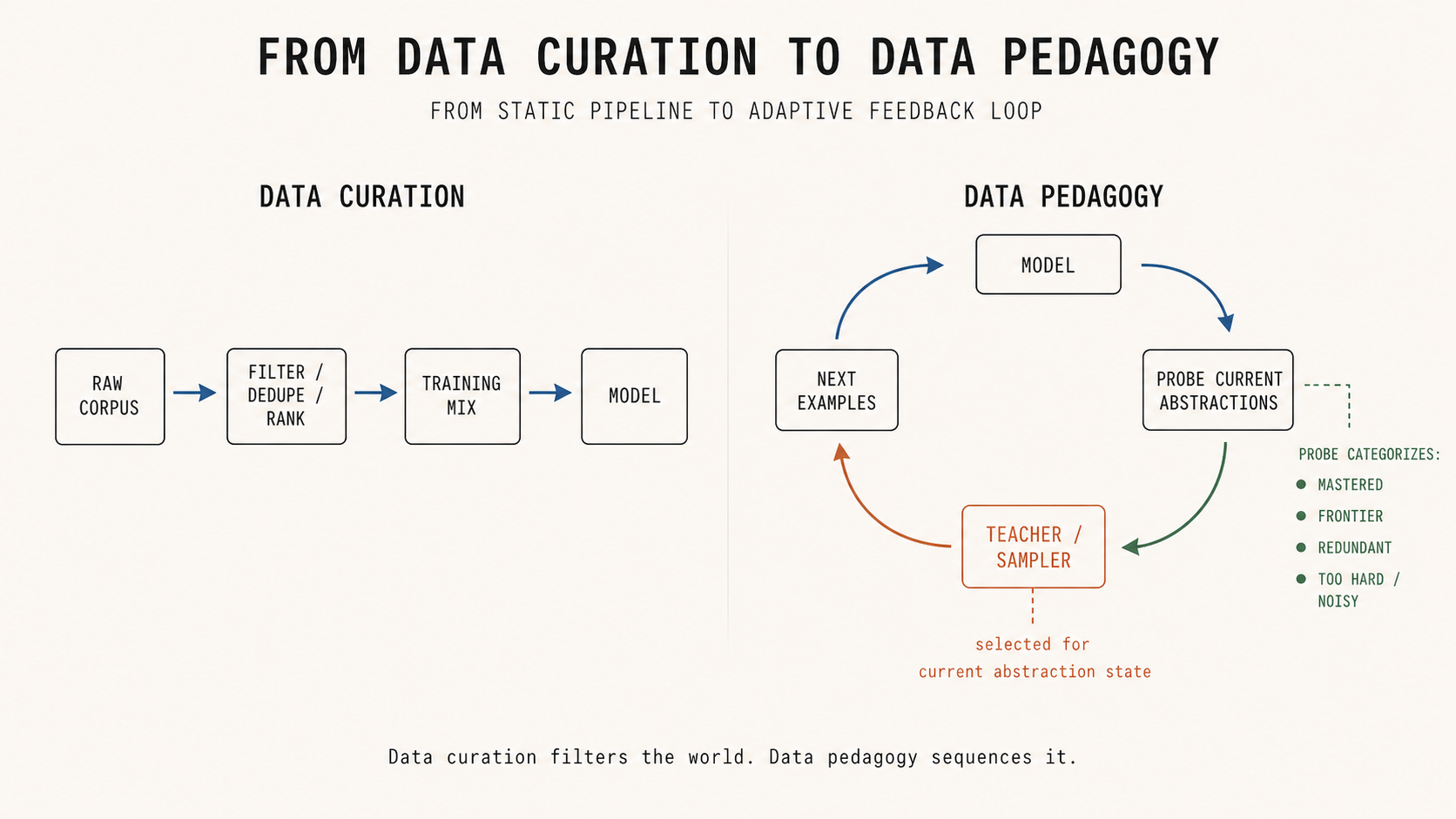

6. From curation to pedagogy

Once data is a control surface, there are three increasingly powerful ways to use it.

- Data curation asks: what data belongs in the dataset?

- Learned data valuation asks: which examples are valuable for learning?

- Data pedagogy asks: given what the model currently understands, what should it see next?

Human education works the third way. We don't teach calculus by sampling uniformly from all mathematical text. We build prerequisites, introduce notation, choose examples that expose structure, vary one factor at a time, test transfer, and then use the newly formed abstraction to compress the next layer. A teacher doesn't just sample the world; a teacher sequences it. LLM pretraining usually asks the model to infer the curriculum from the same stream it's supposed to learn from — which is asking the model to be its own curriculum designer.

The sampler from §4 is a way to see why each rung is more powerful than the last. Curation hand-codes a sector — the human says "this part of the world is good." Learned valuation discovers the sector — the model figures out which examples are generally worth keeping. DataRater (Calian et al.) is the canonical example: instead of hand-writing a quality heuristic, it meta-learns a scoring model by backpropagating through each example's effect on held-out learning efficiency. The teacher itself becomes something we train, not something we design.

But most learned data selection still treats value as a property of the example: a source, a difficulty score, an influence estimate, a mixture weight. Data value isn't intrinsic to an example. It's a relation between an example, a learner, and a moment in training. A static rater can learn which examples are useful in general. A stateful teacher can learn which examples are useful now.

That's the third rung — the sector that moves with the learner. After the model forms a new abstraction, the most useful examples shift. The teacher should shift with them: probe what skills and abstractions have formed, identify mastered and frontier regions, select examples that extend those abstractions, keep enough broad replay to preserve coverage, and repeat. The strongest version may not even keep teacher and learner separate. If the same representation that's learning from the data also helps decide what to sample next, the sampler becomes sensitive to the learner's emerging abstractions. The learner gets better at learning from raw data; the teacher gets better at sampling from it.
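The loop described above (probe, find the frontier, select, replay, repeat) can be sketched on a toy student. Everything in this block is hypothetical: the prerequisite chain of skills, the update rule, the thresholds, and the function names are invented to make the loop concrete. The toy teacher reads mastery directly, where a real one would have to estimate it by probing.

```python
import random

NUM_SKILLS = 20
MASTERED = 0.9          # mastery level at which a skill counts as learned
REPLAY_FRACTION = 0.1   # share of steps spent revisiting mastered skills


def practice(mastery, skill):
    """Toy student update: practice only helps once the prerequisite skill is mastered."""
    prerequisite_ok = skill == 0 or mastery[skill - 1] >= MASTERED
    if prerequisite_ok:
        mastery[skill] += 0.25 * (1.0 - mastery[skill])


def uniform_teacher(mastery, rng):
    """Stateless baseline: ignore the student and sample any skill."""
    return rng.randrange(NUM_SKILLS)


def stateful_teacher(mastery, rng):
    """Probe the student, then sample from the frontier with a little broad replay."""
    mastered = [k for k in range(NUM_SKILLS) if mastery[k] >= MASTERED]
    frontier = [
        k for k in range(NUM_SKILLS)
        if mastery[k] < MASTERED and (k == 0 or mastery[k - 1] >= MASTERED)
    ]
    if mastered and rng.random() < REPLAY_FRACTION:
        return rng.choice(mastered)
    return rng.choice(frontier)


def steps_to_master_all(teacher, seed):
    """Count training steps until every skill in the chain is mastered."""
    rng = random.Random(seed)
    mastery = [0.0] * NUM_SKILLS
    steps = 0
    while min(mastery) < MASTERED:
        practice(mastery, teacher(mastery, rng))
        steps += 1
    return steps


uniform = steps_to_master_all(uniform_teacher, seed=0)
stateful = steps_to_master_all(stateful_teacher, seed=0)
print(f"uniform sampler: {uniform} steps, stateful teacher: {stateful} steps")
```

The toy has no forgetting, so replay is pure overhead here; it is kept only to mirror the loop in the text. The gap between the two teachers illustrates the mechanism and is not evidence about real pretraining.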

Figure 5 — From Data Curation to Data Pedagogy. Curation filters the world. Pedagogy sequences it.

The model should learn. The sampler should learn too.

7. What would count as a real breakthrough?

The hard part is measuring which examples are pedagogically useful. Loss is easy to measure. Abstraction yield is not.

A high-loss example might be useful, or it might be noise. An easy example might be redundant, or it might be the missing bridge that stabilizes a new concept. A rare example might be unimportant, or it might unlock a whole class.

The target isn't "train on the hardest examples." It's to find examples with high abstraction yield — examples that reduce loss across a cluster, improve transfer to held-out variations of the same rule, connect previously separate representation regions, clarify a concept boundary, or turn many memorized cases into one compressed rule.

A real breakthrough wouldn't just produce a better benchmark number. It would show that after discovering an abstraction, the model learns related cases faster.

The clean experiment isn't to start with frontier LLMs. Start where the true abstractions are known: modular arithmetic, compositional grammars, symbolic programs, sparse polynomials, small games. Fit the early IID learning curve. Then introduce a teacher whose curriculum is conditioned on what the student has actually formed, not on the true abstraction known in advance. A real bend wouldn't be a one-time jump; it would be a sustained trajectory that beats the early power-law extrapolation because related cases got cheaper after structure was discovered.
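The "fit early, extrapolate, compare" step might look like the sketch below. It assumes the loss floor $L_\infty$ is known and already subtracted, uses synthetic curves invented for the demonstration, and applies a crude hand-picked tolerance. The labels are a rough heuristic, not a statistical test.

```python
import math


def fit_power_law(compute, excess_loss):
    """Least-squares fit of log(excess_loss) = log(a) - alpha * log(compute). Returns (a, alpha)."""
    lx = [math.log(c) for c in compute]
    ly = [math.log(l) for l in excess_loss]
    mx, my = sum(lx) / len(lx), sum(ly) / len(ly)
    slope = sum((x - mx) * (y - my) for x, y in zip(lx, ly)) / sum((x - mx) ** 2 for x in lx)
    return math.exp(my - slope * mx), -slope


def log_gaps(compute, excess_loss, a, alpha):
    """log(predicted / observed) at each later checkpoint; positive means better than trend."""
    return [math.log(a * c ** -alpha) - math.log(l) for c, l in zip(compute, excess_loss)]


def classify(gaps, tol=0.05):
    """Rough labels: 'on trend', 'shift' (constant gap), or 'bend' (gap keeps growing)."""
    if all(abs(g) < tol for g in gaps):
        return "on trend"
    growing = all(nxt - prev > tol for prev, nxt in zip(gaps, gaps[1:]))
    return "bend" if growing else "shift"


# Synthetic early IID curve: excess loss = 2 * C^-0.1.
early_c = [10.0 ** k for k in range(0, 4)]
early_l = [2.0 * c ** -0.1 for c in early_c]
a, alpha = fit_power_law(early_c, early_l)

# Three synthetic continuations past the fitted range.
late_c = [1e4, 1e5, 1e6]
runs = {
    "same recipe": [2.0 * c ** -0.1 for c in late_c],
    "better engineering": [1.5 * c ** -0.1 for c in late_c],
    "compounding": [2.0 * c ** -0.1 * (c / 1e3) ** -0.1 for c in late_c],
}
results = {name: classify(log_gaps(late_c, losses, a, alpha)) for name, losses in runs.items()}
for name, label in results.items():
    print(f"{name}: {label}")
```

A constant gap to the extrapolation reads as a shift; a gap that keeps widening checkpoint after checkpoint is the sustained trajectory the text asks for.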

What matters isn't whether final accuracy improves. It's whether learning one abstraction reduces the sample or compute required to learn a family of related cases. After structure is found, does the next region get cheaper?
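One hedged way to make that question measurable is sketched here. Let $N_0(\epsilon)$ be the number of samples the learner needs to reach loss $\epsilon$ on a family of related cases before it has formed the abstraction, and $N_A(\epsilon)$ the number it needs afterward. These symbols and the ratio below are illustrative notation, not an established metric.

$ Y(\epsilon) = 1 - \frac{N_A(\epsilon)}{N_0(\epsilon)} $

$Y \approx 0$ means the abstraction bought nothing for the related cases; $Y$ near 1 means they became nearly free. The same ratio can be written with compute in place of samples.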

If the model just keeps improving at the same rate, we have a shift.

If learning one abstraction makes a family of future examples cheaper, we have the beginning of compounding.

A fixed sampler gives us power-law refinement.

A learned sampler gives us curriculum.

A sampler that moves with the learner is what compounding would look like.

That's the difference between training a model and training a learner. And the curve, finally, would not be smooth.

Papers this essay is thinking with

Kaplan et al., "Scaling Laws for Neural Language Models". Important because it made the straightness of language-model scaling curves practically unavoidable.

Hestness et al., "Deep Learning Scaling is Predictable, Empirically". Important because it showed similar power-law behavior across several domains and observed that model improvements often shift error curves more than change exponents.

Michaud et al., "The Quantization Model of Neural Scaling". Useful because it shows how many discrete learned skills can aggregate into a smooth power law.

Sorscher et al., "Beyond Neural Scaling Laws: Beating Power Law Scaling via Data Pruning". Crucial because it demonstrates that better data selection can, in theory, break beyond power-law dataset scaling and potentially approach exponential scaling; it also reports better-than-power-law scaling in practice on ResNets trained on CIFAR-10, SVHN, and ImageNet.

Calian et al., "DataRater: Meta-Learned Dataset Curation". Important because it shows that data value can itself be learned with meta-gradients against held-out training efficiency, rather than specified through hand-written quality heuristics.

DoReMi, Data Mixing Laws, RegMix, MATES, and OLMo-style curriculum work. Useful because they show the field moving from data as passive fuel toward data as an optimized control surface.

Grokking work. Important as a counterexample to any simplistic claim that neural networks never discover structure. They can; the open question is whether they can do so generally enough to change frontier-scale learning regimes.